Install Ollama

Download: https://ollama.com/download/OllamaSetup.exe

Once the download finishes, double-click the installer to run it.

Start a model

Reference documentation:

https://ollama.fan/getting-started/#model-library

https://ollama.com/library

https://ollama.com/library/qwen2

Start the model (the first run downloads it, then opens an interactive prompt):

ollama run qwen2:0.5b
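
Once the model has been started, it can be useful to confirm from Python that the local server is reachable and that the model is listed. The following is a minimal sketch, not an official client: it assumes the server listens on the default address http://127.0.0.1:11434 and uses the `/api/tags` endpoint, which lists locally available models. The helper names `model_names` and `list_local_models` are hypothetical, and only the standard library is used so the check works before any packages are installed.

```python
import json
import urllib.request

OLLAMA_URL = "http://127.0.0.1:11434"  # assumed default local address


def model_names(tags_response):
    """Extract model names from a decoded /api/tags response body."""
    return [m["name"] for m in tags_response.get("models", [])]


def list_local_models(base_url=OLLAMA_URL, timeout=5):
    """Ask the local Ollama server which models it has pulled."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return model_names(json.load(resp))


# Usage (requires the Ollama server to be running):
#     print("qwen2:0.5b" in list_local_models())
```

If the call raises a connection error, the server is most likely not running yet.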

Chat conversation

Reference documentation:

https://ollama.fan/getting-started/examples/001-python-simplechat/

Create a virtual environment (main.py also needs the `requests` package installed in it):

conda create --name langchain python=3.12

main.py

import json

import requests

model = "qwen2:0.5b"  # must match the model started with `ollama run`


def chat(messages):
    # stream=True lets requests hand over lines as they arrive
    # instead of buffering the whole reply first
    r = requests.post(
        "http://127.0.0.1:11434/api/chat",
        json={"model": model, "messages": messages, "stream": True},
        stream=True,
    )
    r.raise_for_status()
    output = ""
    message = {"role": "assistant", "content": ""}

    for line in r.iter_lines():
        if not line:
            continue  # skip blank keep-alive lines
        body = json.loads(line)
        if "error" in body:
            raise Exception(body["error"])
        if body.get("done") is False:
            message = body.get("message", {})
            content = message.get("content", "")
            output += content
            # the response streams one token at a time, print that as we receive it
            print(content, end="", flush=True)

        if body.get("done", False):
            break

    # return the full reply, not just the last fragment,
    # so the conversation history stays complete
    message["content"] = output
    return message


def main():
    messages = []

    while True:
        user_input = input("Enter a prompt: ")
        if not user_input:
            break  # an empty prompt ends the session
        print()
        messages.append({"role": "user", "content": user_input})
        message = chat(messages)
        messages.append(message)
        print("\n\n")


if __name__ == "__main__":
    main()

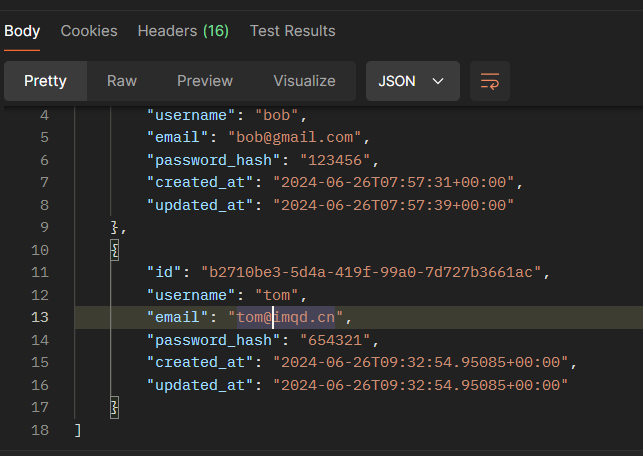
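
The streamed response that main.py reads is newline-delimited JSON: each line carries a fragment of the assistant message with `done` set to false, and the last line has `done` set to true. The sketch below shows that accumulation step on its own, without a server. The sample lines are made up for illustration and the function name `assemble_reply` is hypothetical.

```python
import json


def assemble_reply(lines):
    """Join the content fragments of a streamed /api/chat response.

    `lines` is any iterable of JSON strings (or bytes), one per streamed chunk.
    """
    parts = []
    role = "assistant"
    for line in lines:
        if not line:  # skip blank keep-alive lines
            continue
        body = json.loads(line)
        if "error" in body:
            raise RuntimeError(body["error"])
        message = body.get("message") or {}
        role = message.get("role", role)
        parts.append(message.get("content", ""))
        if body.get("done"):
            break
    return {"role": role, "content": "".join(parts)}


# Made-up sample chunks, shaped like the lines main.py reads:
sample = [
    '{"message": {"role": "assistant", "content": "Hel"}, "done": false}',
    '{"message": {"role": "assistant", "content": "lo"}, "done": false}',
    '{"message": {"role": "assistant", "content": ""}, "done": true}',
]
print(assemble_reply(sample))
```

Keeping the accumulation separate from the HTTP call makes it possible to test the parsing logic with canned data.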

API documentation

https://ollama.fan/reference/api/#json-mode
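
The JSON mode section linked above covers the `format` request field. As a rough sketch, the code below builds a non-streaming `/api/chat` request body with `"format": "json"` and decodes the reply. It assumes the same local server and model as main.py; the helper names `json_mode_payload` and `parse_json_reply` are hypothetical. It also helps to ask for JSON in the prompt itself, since the model may otherwise produce poorly structured output.

```python
import json


def json_mode_payload(model, prompt):
    """Build a non-streaming /api/chat request body that asks for JSON output."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "format": "json",  # JSON mode
        "stream": False,   # one response object instead of a stream
    }


def parse_json_reply(response_body):
    """Decode the JSON document carried in message.content of the response."""
    return json.loads(response_body["message"]["content"])


payload = json_mode_payload("qwen2:0.5b", "List three colors. Respond using JSON.")
print(json.dumps(payload, indent=2))

# Sending it (requires `requests` and a running server):
#     r = requests.post("http://127.0.0.1:11434/api/chat", json=payload)
#     r.raise_for_status()
#     data = parse_json_reply(r.json())
```

With `stream` set to false the server returns a single JSON object, so no line-by-line loop is needed.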